The AI Stack Operators Actually Use: Why Chasing the “Best” Tool Is a Waste

Discover how startups and operators can cut through AI hype, choose the right tools, and build a practical stack that actually gets work done.

TECH TOOLS

Alexander Pau

4/5/2026 · 4 min read

Intro

I’ve written about surviving tool sprawl, building dashboards that people actually use (from dashboards to decisions), and aligning AI projects to real business goals (how to align AI projects). One lesson keeps repeating: tools don’t get work done; your habits and workflow do.

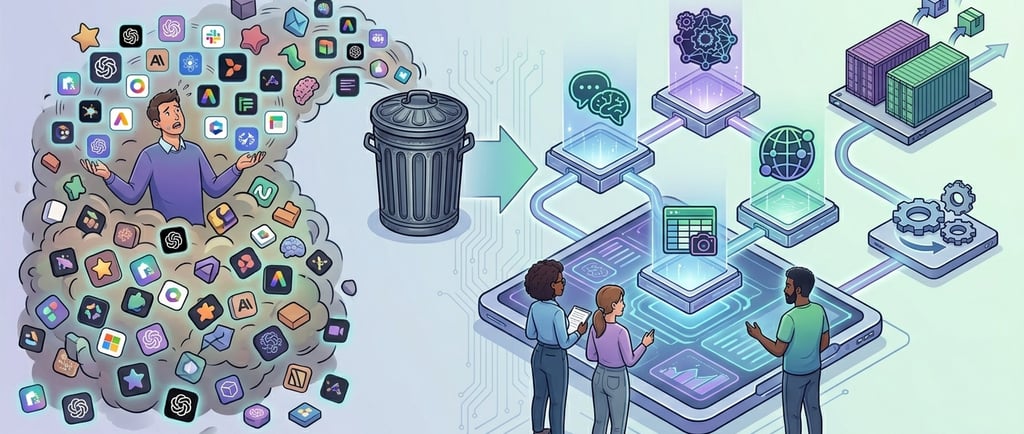

With AI tools, the same truth plays out at scale. Ask most people which AI tool is “best” and you will get a laundry list of names. But “best” misses the point. Operators ask which tool fits the work, fits the stack, and fits the habit. The right answer is rarely a single product. It’s a stack chosen to solve real problems day after day and integrated so it doesn’t get in the way.

Why the “Best Tool” Myth Persists

Most people chase what’s hyped. A blog says “this one’s better.” A viral thread showcases a demo. Before you know it, the tool becomes a supposed silver bullet.

That rarely works in practice. Chasing “the best” often leads to wasted hours, fragmented workflows, and team frustration. Tools amplify habits and processes; they do not replace them. In my piece on tool sprawl, I showed how more tools can mean less clarity. The same holds here: more AI tools mean more context switching, more confusion, and more time bouncing between apps instead of shipping work.

Tools Have Strengths and Limits

Different AI tools shine in different contexts. Some are fast and versatile, others dig deep but are slower. Some bring real-time info into your workflow, others excel at automation or visuals. None replace judgment.

Here’s how to think about them:

General assistance tools help with writing, summarizing, and brainstorming. They reduce friction in everyday tasks but sometimes make confident mistakes that need an operator’s verification.

Reasoning-focused tools handle long documents, complex analysis, and strategy work. They are slower but useful when accuracy matters.

Workflow-integrated tools provide real-time context and connect to your data stack. Essential for research and operational clarity but not deep reasoning.

Productivity automators excel in spreadsheets, decks, or structured docs. Huge time savers if the team actually uses them.

Creative or visual tools help with branding or presentation assets. Great visuals do not hide weak strategy or unclear direction.

Knowing these limits prevents hours wasted on tools that look shiny but don’t solve real problems.

What Good Looks Like in Practice

Execution-focused operators settle into a small stack that addresses the actual work they do. A pattern that works looks like this:

A general-purpose assistant for writing, quick analysis, and brainstorming.

A reasoning-oriented tool for long-form thinking and strategy.

A workflow-integrated tool for real-time info and data context.

Tools that automate recurring tasks like presentations, docs, or spreadsheets.

A visual tool for presentations, branding, or content assets.

The trick isn’t having all tools. It’s choosing a few that map to real tasks and embedding them into your workflow. Execution, integration, and habit make the stack effective.

For example, I use a general assistant to draft ideas and outline content, a reasoning tool to unpack complex strategy documents, and a workflow tool to pull in real-time data. Add a visual tool for presentation assets, and a multi-hour project can become a focused two-hour sprint. It’s not magic; it’s stacking tools with purpose.
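One way to make the stack concrete is to write down which category owns which recurring task and then check for gaps. The sketch below is illustrative only: the category keys follow the five roles listed above, but the task names and both helper functions are hypothetical and should be replaced with your own work.

```python
from typing import Optional

# Hypothetical mapping from recurring tasks to the five tool roles above.
# The task names are illustrative assumptions, not a list from this article.
STACK = {
    "general_assistant": {"draft outline", "summarize notes", "brainstorm"},
    "reasoning_tool": {"review strategy doc", "analyze long report"},
    "workflow_tool": {"pull live metrics", "research market"},
    "automation_tool": {"build weekly deck", "update spreadsheet"},
    "visual_tool": {"create presentation assets"},
}


def category_for(task: str) -> Optional[str]:
    """Return the stack category that owns a task, or None if nothing covers it."""
    for category, tasks in STACK.items():
        if task in tasks:
            return category
    return None


def uncovered(tasks: list) -> list:
    """List the tasks that no category in the stack currently handles."""
    return [t for t in tasks if category_for(t) is None]


# Tasks from a hypothetical week of work.
week = ["brainstorm", "build weekly deck", "edit video"]
print(uncovered(week))
```

A task that comes back uncovered is a reason to look at a new tool. A tool with no tasks mapped to it is a candidate for removal.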

Evidence From Real Startup Spending

If you want real insight into how operators choose tools, don’t look at vanity metrics like downloads or hype charts. Look at where money actually flows. Andreessen Horowitz (a16z) partnered with fintech Mercury to release the AI Application Spending Report based on transaction data from over 200,000 startups. The report identifies the top AI-native applications that startups are actually paying for (implicator.ai).

The findings show:

Startups are spreading spending across many tools, not consolidating around one winner (foundryradar.com).

Horizontal tools like general assistants, copilots, and productivity aides make up a large share of real spend (nomulabs.com).

Assistants from providers like OpenAI and Anthropic appear frequently, but developer and workspace platforms like Replit also rank high in spending, which points to diverse use cases.

Founders are not voting with clicks but with real budgets. They buy tools that help them ship work and build products, not tools that look good in a leaderboard.

Choosing Tools Like an Operator

A simple framework prevents tool sprawl and wasted effort:

Match the task — start with the problem, not the brand.

Check integration — will it plug into your existing systems without friction?

Consider adoption friction — can your team use it consistently?

Evaluate verification cost — how much extra checking is needed to trust outputs?

Measure leverage — does it free up execution time or just distract?

Operators succeed because they pick tools that reduce friction rather than add complexity. Even with AI, the most critical decisions are workflow design and execution discipline.
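The five questions above can be turned into a rough scorecard. This is a minimal sketch, not a validated method: the 1 to 5 scale, the `min_total` and `min_each` thresholds, and the example tools and their scores are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# One field per question in the framework above.
CRITERIA = ("task_match", "integration", "adoption", "verification", "leverage")


@dataclass
class ToolScore:
    name: str
    task_match: int    # 1-5: solves a real, recurring problem
    integration: int   # 1-5: plugs into existing systems without friction
    adoption: int      # 1-5: the team can use it consistently
    verification: int  # 1-5: 5 means outputs need little extra checking
    leverage: int      # 1-5: frees up execution time

    def total(self) -> int:
        return sum(getattr(self, c) for c in CRITERIA)

    def weakest(self) -> str:
        """Name of the lowest-scoring criterion (first one wins on ties)."""
        return min(CRITERIA, key=lambda c: getattr(self, c))


def shortlist(tools, min_total=18, min_each=3):
    """Keep tools that clear both thresholds (assumed values), best first."""
    keep = [
        t for t in tools
        if t.total() >= min_total and getattr(t, t.weakest()) >= min_each
    ]
    return sorted(keep, key=lambda t: t.total(), reverse=True)


# Hypothetical tools with made-up scores.
assistant_a = ToolScore("assistant_a", 5, 4, 4, 3, 4)
shiny_demo = ToolScore("shiny_demo", 4, 2, 2, 2, 3)

for tool in shortlist([shiny_demo, assistant_a]):
    print(tool.name, tool.total(), "weakest:", tool.weakest())
```

The per-criterion floor matters as much as the total: a tool that scores well overall but fails on integration or adoption still adds friction, which is the failure mode the framework is meant to catch.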

Execution Beats Experimentation

It is tempting to chase every new model, new launch, or hype cycle. Each experiment adds decision overhead and context switches. Operators do not chase hype. They build a stack that reliably gets work done and iterate on usage.

Treat AI tools as assistants with jobs, not magic fixes. That’s how real companies capture value. The adoption research listed under Further Reading points the same way: tools alone do not guarantee impact, and integration, governance, and workflow design matter most.

Why This Matters Now

AI is in your workflow, content stack, corporate tools, and decision-making. For startups and career pivoters, this is an opportunity if approached deliberately. Tool sprawl and hype-chasing are silent productivity killers. Execution-focused operators cut through noise by picking tools that fit their work.

It’s the same lesson I wrote about in operational resilience in AI — survival comes from process, integration, and discipline, not chasing every new release. Pick a stack, understand its limits, and use it to amplify execution. That’s how operators win while everyone else chases hype.

📚 Further Reading

AI Adoption Proves Its Worth – Global survey on AI usage and adoption challenges.

AI in Professional Services – Case study on adoption patterns in professional service firms.

AI in Marketing Strategies – How AI improves engagement and trend prediction.

AI Adoption Puzzle – Why AI usage is up but impact lags.

Generative AI in Energy – Real-world rollout and workflow integration insights.

AI Use Cases and Impact – Meta-study of efficiency and sustainable outcomes.

TLDR

There is no single best AI tool — operators care about fit, not hype.

Tools alone do not fix problems; execution and integration do.

Pick a small stack that solves your core needs and embed it into your workflow.

Knowing where tools shine and where they break gives you leverage.

A simple framework for choosing tools beats chasing every new release.